While websites used to be made up of basic text, colours and hyperlinks, the majority are now colourful, photographic works of art. A complete about-face has taken place since the first websites, which consisted of Times New Roman text, a few hyperlinks and, more often than not, a single header. Today, flashy, mobile-responsive websites seem to be the way forward, but increasingly sleek designs come at a price: larger pages and longer loading times.

What can be done about this? The way forward is clearly not to return to content-only, minimally designed pages. Realistically, we’re unlikely ever to be able to offer flashy websites without some extra download cost, so the middle ground is to optimise every resource for the web.

Image Optimisation

Many impactful websites rely heavily on images, graphics and even background videos. These come at a cost to the user, because they all have to be downloaded. Optimising images reduces their file size, which speeds up downloads without noticeably compromising quality. Free image compression tools such as Kraken.IO and Compressor.IO are available for the job, so there really is no excuse for not doing it.

As much as it pains me, take a look at this uncompressed image of a snow scene from the French Alps.

The size of this image is 128KB. Not exactly massive, but files of this size soon add up. Compressor.IO makes short work of optimising this image for web use by shaving 71% (91KB) off the file size, but how does it look?

This image is now 37KB. Can you tell the difference?
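The saving quoted for the snow scene is simple arithmetic: the amount removed divided by the original size. A minimal Python sketch of that calculation, using the figures above (the name `percent_saving` is just an illustrative choice):

```python
def percent_saving(original_kb: float, optimised_kb: float) -> float:
    """Return the size reduction as a percentage of the original file size."""
    if original_kb <= 0:
        raise ValueError("original size must be positive")
    return (original_kb - optimised_kb) / original_kb * 100


# Snow-scene example: 128KB before compression, 37KB after.
saved_kb = 128 - 37
print(f"Saved {saved_kb}KB ({percent_saving(128, 37):.0f}%)")  # Saved 91KB (71%)
```

The exact figure is 71.1%, which rounds to the 71% quoted.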

Minifying JavaScript and Stylesheets

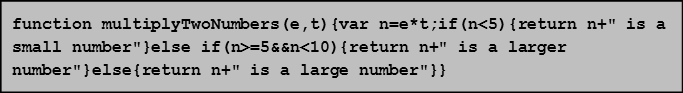

Minification of code is always good practice on a production server. Minification involves removing all unnecessary whitespace and comments and, in many cases, replacing long variable names with shorter ones. Techniques like this are often applied to JavaScript, the programming language found in most websites. Take a look at the code examples below.

These two snippets are the same JavaScript function, but the lower one has been minified. The saving on this particular piece of code is 61.6%, simply from stripping out whitespace and shortening variable names: ‘firstNumber’ and ‘secondNumber’ become ‘e’ and ‘t’, and ‘multipliedNumbers’ becomes ‘n’. In theory, then, the optimised code could be downloaded in less than half the time.
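To show what a minifier actually does to code like this, here is a deliberately naive sketch in Python. It is not how dedicated minifiers work (they parse the JavaScript properly); it simply strips comments, collapses whitespace and renames a hand-picked set of identifiers. The `multiplyNumbers` function is a hypothetical reconstruction of the kind of snippet described, not the original example.

```python
import re

# Hypothetical function in the spirit of the example above.
JS_SOURCE = """
function multiplyNumbers(firstNumber, secondNumber) {
    // Multiply the two inputs together
    var multipliedNumbers = firstNumber * secondNumber;
    return multipliedNumbers;
}
"""

# Long names mapped to short ones, as a minifier might choose them.
RENAMES = {"firstNumber": "e", "secondNumber": "t", "multipliedNumbers": "n"}


def toy_minify(source: str, renames: dict) -> str:
    """Naively minify JavaScript. Illustration only: this does not parse the
    code, so string or regex literals containing '//', '/*' or the renamed
    words would be mangled."""
    # Remove /* block */ comments, then // line comments.
    code = re.sub(r"/\*.*?\*/", "", source, flags=re.DOTALL)
    code = re.sub(r"//[^\n]*", "", code)
    # Rename whole identifiers only, so longer names containing them are untouched.
    for old, new in renames.items():
        code = re.sub(rf"\b{re.escape(old)}\b", new, code)
    # Collapse every run of whitespace (including newlines) to a single space.
    code = re.sub(r"\s+", " ", code).strip()
    # Drop spaces around punctuation where JavaScript does not need them.
    return re.sub(r"\s*([{}();,=*])\s*", r"\1", code)


minified = toy_minify(JS_SOURCE, RENAMES)
print(minified)  # function multiplyNumbers(e,t){var n=e*t;return n;}
saving = (1 - len(minified) / len(JS_SOURCE)) * 100
print(f"{len(JS_SOURCE)} -> {len(minified)} characters ({saving:.1f}% smaller)")
```

The percentage this prints depends on how the hypothetical source is indented, so it won’t necessarily match the figure quoted for the original snippets.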

You might ask yourself whether it’s worth the bother, especially as this piece of code is less than 1KB in size. Well, imagine that code on a large, heavily used site, such as a supermarket or an online bank. If it were downloaded 300 times an hour, every hour, which is more than possible on a large site, the minified version could save 80KB an hour. In 24 hours, that figure rises to just shy of 2MB of downloaded data. In a 30-day month, it becomes over 55MB. In a year? Roughly 684MB. All that data saved, just by removing characters that weren’t needed in the first place. Scale that up to a single 100KB JavaScript file, and minification can save gigabytes of unnecessary downloads every year.
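The projection above is just the hourly saving multiplied out over longer periods. A short Python sketch of the same arithmetic, taking the 80KB-an-hour figure as given and treating 1MB as 1024KB:

```python
HOURLY_SAVING_KB = 80  # saving per hour from the example (300 downloads an hour)
KB_PER_MB = 1024

PERIODS_IN_HOURS = {
    "day": 24,
    "30-day month": 24 * 30,
    "twelve 30-day months": 24 * 30 * 12,
    "365-day year": 24 * 365,
}


def projected_saving_mb(hourly_saving_kb: float, hours: float) -> float:
    """Data saved over the given number of hours, in megabytes."""
    return hourly_saving_kb * hours / KB_PER_MB


for period, hours in PERIODS_IN_HOURS.items():
    print(f"{period}: {projected_saving_mb(HOURLY_SAVING_KB, hours):.1f}MB")
```

This works out at 1.875MB a day and 56.25MB a month. Twelve 30-day months come to 675MB, while a full 365-day year comes to about 684MB.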

There is a range of sites on the internet where you can minify your JavaScript and CSS code by hand, but if you’re working on an MVC site, the project usually has options for minifying code automatically. Either way, it’s more than worthwhile trimming the fat from your code.

Caching Data

From a more programming-heavy point of view, data can be cached in .NET to improve how quickly it is displayed on a page. Take a blog page, for example. The blogs are most likely stored in a database, and when a user loads the page, the list of blogs is retrieved from the database, stored in a list and then displayed however the developer wishes. But how often are new blogs going to appear on the site? Realistically, at most once every couple of days, and perhaps only once a week, once a month or even less. So does it really make sense to hit the database with repeated requests for the same data when it is unlikely to have changed?

If the answer to that last question is no, caching can be implemented. After the list of blogs is first retrieved, it can be stored in the cache for a specified period of time, anywhere from seconds to hours depending on how often the data needs refreshing. When the data is requested again, the database is only queried if the cache for that item is empty or has expired; otherwise the cached list is used. In some circumstances, simply adding a cache like this can save hundreds of database requests.
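In .NET this would normally be done with the framework’s built-in caching (for example, `MemoryCache`). To keep the examples in one language, here is the same time-based pattern as a minimal Python sketch. `TimedCache` and `fetch_blogs_from_db` are hypothetical names, and the fake database simply counts how often it is queried.

```python
import time
from typing import Any, Callable, Dict, Tuple


class TimedCache:
    """Keep each loaded value for ttl_seconds, then reload it on the next request.

    Not thread-safe: a real site would also need locking around reloads.
    """

    def __init__(self, ttl_seconds: float, clock: Callable[[], float] = time.monotonic):
        self._ttl = ttl_seconds
        self._clock = clock  # injectable so expiry can be tested without waiting
        self._entries: Dict[str, Tuple[float, Any]] = {}  # key -> (expires_at, value)

    def get_or_load(self, key: str, loader: Callable[[], Any]) -> Any:
        now = self._clock()
        entry = self._entries.get(key)
        if entry is not None and now < entry[0]:
            return entry[1]  # still fresh: no trip to the database
        value = loader()  # empty or expired: query the data source again
        self._entries[key] = (now + self._ttl, value)
        return value


db_calls = 0


def fetch_blogs_from_db() -> list:
    """Hypothetical stand-in for the real database query."""
    global db_calls
    db_calls += 1
    return ["First post", "Second post"]


cache = TimedCache(ttl_seconds=3600)  # refresh the blog list at most once an hour
for _ in range(200):  # 200 page loads within the hour
    blogs = cache.get_or_load("blog-list", fetch_blogs_from_db)
print(f"Database queried {db_calls} time(s) for 200 page loads")
```

The cache key plays the role of “that particular item”, and `ttl_seconds` is the refresh period: the longer it is, the fewer database hits, but the staler the list can become.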

So just how good is my site?

To start getting a measure of how well optimised your site is, try Google PageSpeed Insights. Enter your URL and PageSpeed Insights will analyse your website for unoptimised images, uncompressed code and poor server response times. At the end of the analysis, it returns a score between 0 and 100: the higher the score, the better optimised the website.
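PageSpeed Insights can also be queried programmatically, which is handy for checking a site regularly. A minimal Python sketch, assuming the v5 API endpoint and its response shape, where the performance score is reported as a fraction between 0 and 1 under `lighthouseResult.categories.performance.score`. An API key may be needed for frequent use, and `build_psi_request`, `performance_score` and `fetch_score` are illustrative names.

```python
import json
from urllib.parse import urlencode
from urllib.request import urlopen

# Assumed endpoint for version 5 of the PageSpeed Insights API.
PSI_ENDPOINT = "https://www.googleapis.com/pagespeedonline/v5/runPagespeed"


def build_psi_request(page_url: str, strategy: str = "mobile") -> str:
    """Build the request URL; strategy is 'mobile' or 'desktop'."""
    query = urlencode({"url": page_url, "strategy": strategy})
    return f"{PSI_ENDPOINT}?{query}"


def performance_score(response: dict) -> int:
    """Convert the API's assumed 0-1 performance score to the 0-100 scale."""
    fraction = response["lighthouseResult"]["categories"]["performance"]["score"]
    return round(fraction * 100)


def fetch_score(page_url: str) -> int:
    """Run a live analysis (needs network access and can take a while)."""
    with urlopen(build_psi_request(page_url), timeout=120) as resp:
        return performance_score(json.load(resp))


print(build_psi_request("https://example.com"))
```

Calling `fetch_score("https://example.com")` would run a live check; separating URL building and score parsing from the network call keeps those parts easy to test offline.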

One of the main reasons Google offer this service is that page speed is one of their ranking factors. In other words, the speed of the sites that appear in their search results is taken into account and can affect how they rank. It’s no surprise that visitors prefer quick-loading websites, but search engines like to recommend them too. All this means that faster, better-optimised sites can receive more traffic from organic search.

The Final Word

Optimising your website might seem pointless because you can’t see the results right away, but the time spent is a good investment. Remember, visitors form a first impression of your site within seconds, and you don’t want to get off to a bad start by making them wait for images and functionality to download, do you?

Flickr Creative Commons Image: philippestanus